By Mathias Paul Babin, PhD candidate, Western University

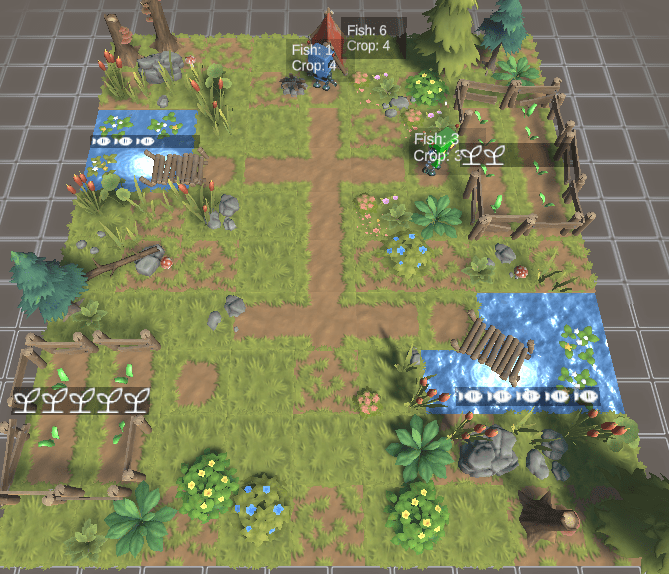

This environment shows how two agents learned not only to share resources but also to extract them in a sustainable way.

See https://play.unity.com/mg/other/webgl-builds-360118

For a little bit of background on what is happening here:

- Agents are tasked with surviving for as long as possible: they receive a positive reward of +1 for every second that they are alive. An agent “dies” when it runs out of fish or crops.

- Agents' actions include gathering fish, gathering crops, or trading one resource for another at a designated trading post. In addition, each agent is given a role as either a fisher or a farmer. When gathering resources, an agent extracts a bonus resource based on its role. This is meant to represent some form of proficiency at a task.

- The environment itself can run out of resources if they are over-extracted. A pond can run out of fish if fewer than 2 remain, and a field will stop producing crops if all of them are extracted.

- Based on this setup, the agents learned to harvest resources efficiently by adhering to their given roles, to trade resources with one another, and to rotate between locations so as not to deplete the land. The outcome is a simulation in which agents learned to survive indefinitely by working cooperatively, each with only a selfish motivation to survive for as long as possible.
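To make the rules above concrete, here is a minimal Python sketch of them. It is not the code behind the Unity build. The class and function names, the starting inventories, the one-unit bonus, the one-for-one trade, and the reading of "fewer than 2 fish" as a regrowth threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

# Which resource each role is proficient at (role names come from the post).
ROLE_RESOURCE = {"fisher": "fish", "farmer": "crops"}


@dataclass
class Site:
    resource: str  # "fish" for a pond, "crops" for a field
    stock: int

    def regrow(self) -> None:
        # Assumed rule: a pond needs at least 2 fish to replenish,
        # a field needs at least 1 crop left. Below that it stays depleted.
        threshold = 2 if self.resource == "fish" else 1
        if self.stock >= threshold:
            self.stock += 1  # assumed regrowth of one unit per tick


@dataclass
class Agent:
    role: str  # "fisher" or "farmer"
    # Assumed starting inventory.
    inventory: dict = field(default_factory=lambda: {"fish": 3, "crops": 3})

    @property
    def alive(self) -> bool:
        # An agent "dies" when it runs out of fish or crops.
        return self.inventory["fish"] > 0 and self.inventory["crops"] > 0


def gather(agent: Agent, site: Site) -> int:
    """Take one unit, plus an assumed bonus unit if the site matches the role."""
    wanted = 1 + (1 if ROLE_RESOURCE[agent.role] == site.resource else 0)
    taken = min(wanted, site.stock)
    site.stock -= taken
    agent.inventory[site.resource] += taken
    return taken


def trade(a: Agent, b: Agent, give: str, receive: str) -> bool:
    """a hands b one unit of `give` in exchange for one unit of `receive`."""
    if a.inventory[give] < 1 or b.inventory[receive] < 1:
        return False
    a.inventory[give] -= 1
    b.inventory[give] += 1
    b.inventory[receive] -= 1
    a.inventory[receive] += 1
    return True


def survival_reward(agent: Agent) -> float:
    """+1 for every second (here: every step) the agent is alive."""
    return 1.0 if agent.alive else 0.0
```

Under these assumptions, a fisher who gathers from a pond holding 3 fish takes 2 and leaves 1, after which `regrow()` no longer adds fish. This is the kind of depletion the agents learned to avoid by rotating between locations.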

On the more technical side of things, both agents share the same “brain”, so to speak: a single model (a neural network) is being trained here, but each agent has its own instance, or copy, of it and thus makes decisions independently of the other.
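The shared-model idea can be sketched as follows. This is only an illustration: a tiny linear softmax policy stands in for the actual neural network, and the action names and the three-number observation (fish held, crops held, a role flag) are hypothetical.

```python
import math
import random

# Hypothetical action set, loosely following the actions described above.
ACTIONS = ["gather_fish", "gather_crops", "trade"]


class SharedPolicy:
    """One set of weights used by every agent (linear softmax for brevity)."""

    def __init__(self, obs_size: int, seed: int = 0):
        rng = random.Random(seed)
        # One weight row per action.
        self.weights = [
            [rng.uniform(-0.1, 0.1) for _ in range(obs_size)] for _ in ACTIONS
        ]

    def action_probs(self, obs):
        logits = [sum(w * x for w, x in zip(row, obs)) for row in self.weights]
        peak = max(logits)  # subtract the max for numerical stability
        exps = [math.exp(l - peak) for l in logits]
        total = sum(exps)
        return [e / total for e in exps]

    def act(self, obs, rng: random.Random) -> str:
        # Sample an action from the policy's distribution for this observation.
        return rng.choices(ACTIONS, weights=self.action_probs(obs))[0]


policy = SharedPolicy(obs_size=3)
rng = random.Random(1)

# Each agent feeds its own observation through the same weights.
observations = {
    "fisher": [1.0, 4.0, 1.0],  # assumed layout: fish, crops, is_fisher
    "farmer": [4.0, 1.0, 0.0],
}
actions = {name: policy.act(obs, rng) for name, obs in observations.items()}
```

Because each agent sees a different observation, the same weights can produce different decisions for the fisher and the farmer, while every experience either agent collects goes toward training the one model.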

Optimization of this model is performed using PPO (Proximal Policy Optimization), a state-of-the-art reinforcement learning algorithm (I believe ChatGPT used this as well).
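For readers unfamiliar with PPO, its core is a clipped surrogate objective that keeps each update from moving the policy too far from the one that collected the data. The sketch below computes that objective in plain Python. The function name is made up for this post, and the clipping value of 0.2 is a commonly used default rather than a setting confirmed for this project.

```python
import math


def ppo_clipped_objective(new_logps, old_logps, advantages, clip_eps=0.2):
    """Mean clipped surrogate objective over a batch (to be maximized).

    new_logps / old_logps: log-probabilities of the taken actions under the
    current policy and the data-collecting policy; advantages: estimates of
    how much better each action was than expected.
    """
    total = 0.0
    for new_lp, old_lp, adv in zip(new_logps, old_logps, advantages):
        ratio = math.exp(new_lp - old_lp)  # probability ratio new / old
        clipped = max(1.0 - clip_eps, min(ratio, 1.0 + clip_eps))
        # Take the more pessimistic of the unclipped and clipped terms.
        total += min(ratio * adv, clipped * adv)
    return total / len(advantages)
```

When the new and old policies agree, the ratio is 1 and the objective is just the mean advantage. Once the ratio leaves the range from `1 - clip_eps` to `1 + clip_eps` in the direction the advantage favors, the clipped term takes over and the incentive to push further disappears.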

What’s interesting about this outcome is that the agents have no form of communication, act selfishly, and are never directly instructed on how to behave, yet all of this sophisticated behaviour emerges nonetheless.